Lip Synchronisation in AI Video: How It Works and Why It Matters in 2026

Lip synchronisation powers realistic AI video. Learn how the technology works, where it's used today, and how to evaluate lip sync quality in AI tools.

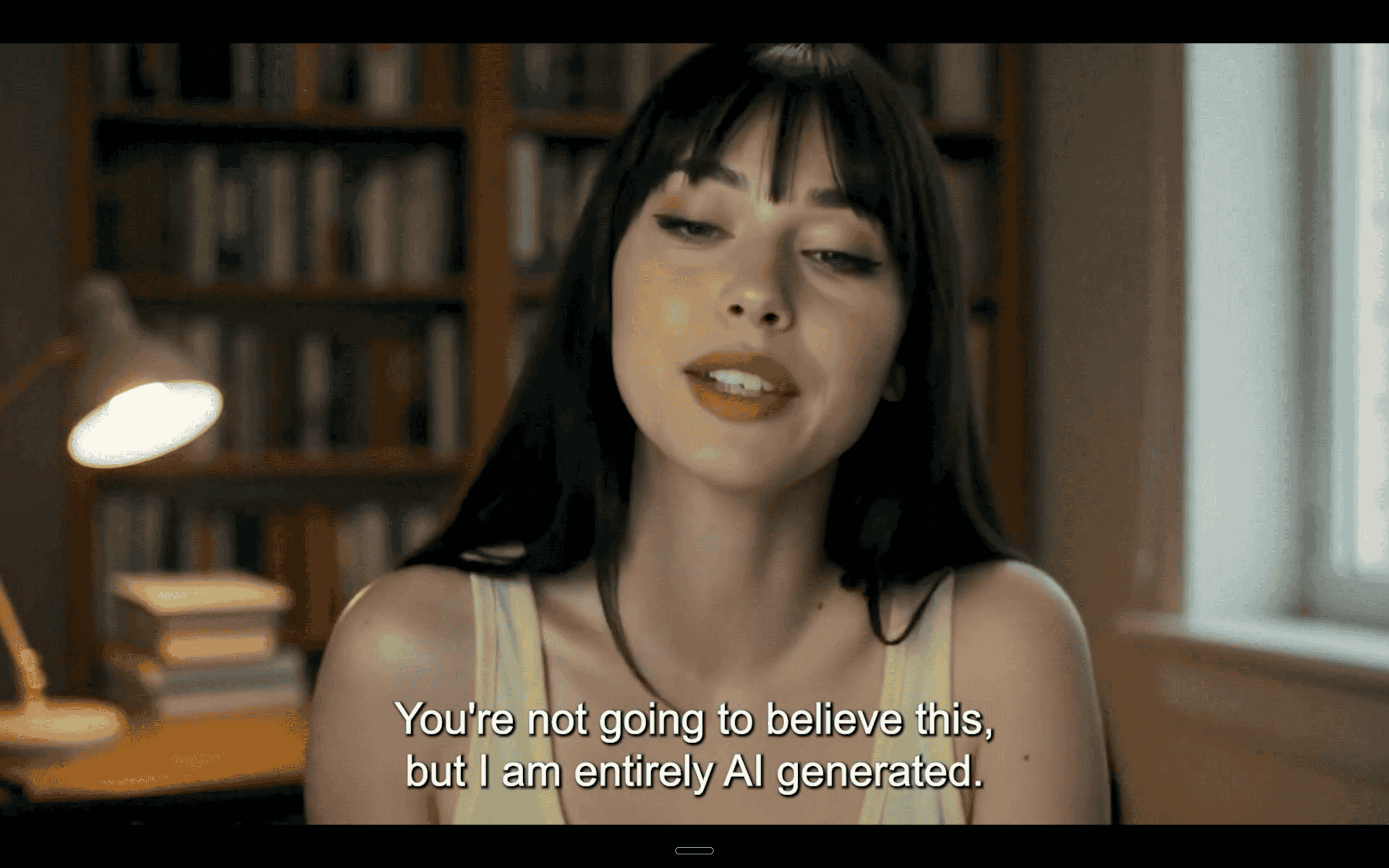

Lip synchronisation is the process of matching mouth movements to spoken audio so that what you see lines up with what you hear. It sounds simple. In practice, it's one of the hardest problems in AI video generation, and the single biggest factor that determines whether an AI-created video looks professional or fake.

For decades, lip sync in video was a manual process. Animators painstakingly matched mouth shapes to dialogue frames, and dubbing studios hired voice actors and editors to overlay new audio onto existing footage. The results were often good enough for cartoons or foreign films, but never fully convincing at close range.

AI has completely changed that. Modern lip synchronisation systems analyze audio, map facial landmarks, and generate mouth movements automatically. The technology has improved so fast that the AI video generator market, valued at roughly $788.5 million in 2025, is projected to reach $946.4 million in 2026. Lip sync quality is a big reason why. When the mouth moves right, viewers trust the video. When it doesn't, they click away.

This article breaks down how lip synchronisation actually works, where the technology stands today, and what to look for when choosing an AI video tool that gets it right.

Understanding lip synchronisation at a technical level helps you evaluate the tools that claim to do it well. The pipeline has 4 core stages, and each one matters.

Every lip sync system starts with audio. The AI breaks down speech into phonemes, the smallest units of sound in a language. The English word "cat" contains 3 phonemes: /k/, /ae/, /t/. Each phoneme corresponds to a specific mouth position.

But phonemes alone aren't enough. The system also analyzes timing, emphasis, and pitch. A shouted word shapes the mouth differently than a whispered one. The speed of speech affects how long each mouth position holds. Getting this analysis wrong means the entire downstream output will look off.
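As a rough illustration of why timing matters, the sketch below turns a list of timed phonemes into one phoneme label per video frame. The `TimedPhoneme` type, the `phoneme_per_frame` helper, and the timings for "cat" are all hypothetical; a real system would get its timings from a speech aligner rather than hand-written values.

```python
from dataclasses import dataclass


@dataclass
class TimedPhoneme:
    symbol: str    # phoneme label, e.g. "k"
    start_ms: int  # when the sound starts
    end_ms: int    # when the sound ends


def phoneme_per_frame(phonemes, fps=25):
    """Return the phoneme active at the midpoint of each video frame."""
    if not phonemes:
        return []
    frame_ms = 1000 / fps
    n_frames = int(phonemes[-1].end_ms // frame_ms)
    frames = []
    for i in range(n_frames):
        midpoint = (i + 0.5) * frame_ms
        # Fall back to silence when no phoneme covers this instant.
        active = next(
            (p.symbol for p in phonemes if p.start_ms <= midpoint < p.end_ms),
            "sil",
        )
        frames.append(active)
    return frames


# Hypothetical timings for the word "cat" (values are illustrative only).
cat = [
    TimedPhoneme("k", 0, 80),
    TimedPhoneme("ae", 80, 240),
    TimedPhoneme("t", 240, 320),
]
print(phoneme_per_frame(cat))
```

Slower speech stretches each phoneme over more frames, which is why the mouth position has to hold longer.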

Once the audio is processed, the system needs to understand the face it's working with. AI models detect facial landmarks, which are specific points on the face like the corners of the mouth, the edges of the lips, the jawline, and the cheeks.

Modern systems track 68 to 468 facial landmarks depending on the model. More landmarks mean more precision, but also more computational cost. The landmark map creates a skeleton of the face that the AI can manipulate without distorting the rest of the image.
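To make the landmark idea concrete, here is a minimal sketch that measures how open the mouth is from a set of 2D landmarks. It assumes the widely used 68-point layout with 0-based indices 48 and 54 for the mouth corners and 62 and 66 for the inner-lip centres. Those indices and the `mouth_open_ratio` helper are assumptions for illustration, not the method of any particular tool.

```python
import math

# Assumed indices from the common 68-point layout (0-based).
LEFT_CORNER, RIGHT_CORNER = 48, 54
UPPER_INNER_LIP, LOWER_INNER_LIP = 62, 66


def mouth_open_ratio(landmarks):
    """Ratio of inner-lip gap to mouth width for 68 (x, y) landmarks.

    Dividing by mouth width makes the value roughly independent of how
    large the face appears in the frame.
    """
    width = math.dist(landmarks[LEFT_CORNER], landmarks[RIGHT_CORNER])
    gap = math.dist(landmarks[UPPER_INNER_LIP], landmarks[LOWER_INNER_LIP])
    return gap / width if width else 0.0


# Synthetic face: only the four mouth points matter for this example.
face = [(0.0, 0.0)] * 68
face[LEFT_CORNER] = (100.0, 200.0)
face[RIGHT_CORNER] = (160.0, 200.0)
face[UPPER_INNER_LIP] = (130.0, 190.0)
face[LOWER_INNER_LIP] = (130.0, 220.0)
print(mouth_open_ratio(face))
```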

This is where the real work happens. The AI maps phonemes to visemes, which are the visual representations of sounds. While /b/ and /p/ sound different, they look nearly identical on a person's face because both involve the lips pressing together and then releasing. The English language has roughly 40 phonemes but only about 14 distinct visemes.
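The many-to-one relationship between phonemes and visemes can be sketched as a lookup table. The viseme names and groupings below are illustrative assumptions covering only a handful of sounds; production systems use their own, larger tables.

```python
# Hypothetical, partial phoneme-to-viseme table (illustrative groupings).
PHONEME_TO_VISEME = {
    "p": "lips_closed", "b": "lips_closed", "m": "lips_closed",
    "f": "lip_to_teeth", "v": "lip_to_teeth",
    "t": "tongue_tip", "d": "tongue_tip", "n": "tongue_tip",
    "k": "back_tongue", "g": "back_tongue",
    "ae": "open_wide", "aa": "open_wide",
    "uw": "rounded", "ow": "rounded",
}


def to_visemes(phonemes):
    """Map each phoneme to its viseme; unknown sounds fall back to neutral."""
    return [PHONEME_TO_VISEME.get(p, "neutral") for p in phonemes]


bat = to_visemes(["b", "ae", "t"])
pat = to_visemes(["p", "ae", "t"])
print(bat)
print(bat == pat)  # "bat" and "pat" sound different but look the same
```

This is also why lip-reading alone is ambiguous: several different words can share one viseme sequence.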

Neural networks, particularly generative adversarial networks (GANs) and diffusion models, generate the actual pixel-level changes to the mouth region. The model has to handle teeth visibility, tongue position, lip thickness variations, and the skin texture around the mouth.

Wav2Lip, published at ACM Multimedia 2020 by researchers at IIIT Hyderabad, was a turning point here. It introduced a pre-trained lip-sync expert discriminator that evaluates whether generated lip movements actually match the audio, not just whether they look realistic. That distinction matters. A mouth can look perfectly natural while saying the wrong thing.
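The core idea of a sync expert can be sketched in a few lines: embed a short audio window and the matching video frames, compare the embeddings with cosine similarity, and penalise the generator when they disagree. The code below is a simplified illustration using plain Python lists in place of learned embeddings. It assumes non-negative embedding values so the similarity can be read as a probability, and it is not the Wav2Lip implementation.

```python
import math

EPS = 1e-7  # keeps log() away from zero


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def sync_loss(audio_emb, video_emb, in_sync=True):
    """Binary cross-entropy on the similarity, treated as P(in sync)."""
    p = min(max(cosine_similarity(audio_emb, video_emb), EPS), 1 - EPS)
    return -math.log(p) if in_sync else -math.log(1 - p)


# Toy embeddings: the first pair agrees, the second pair does not.
matched = sync_loss([1.0, 0.5], [1.0, 0.5])
mismatched = sync_loss([1.0, 0.0], [0.0, 1.0])
print(matched < mismatched)
```

A realism-only discriminator has no equivalent of this term, which is how a mouth can look natural while saying the wrong thing.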

3 challenges separate average lip synchronisation from convincing lip synchronisation.

Multiple languages create the 1st hurdle. Mandarin uses tonal variations that shape the mouth differently than English. Arabic has pharyngeal sounds with no English equivalent. A system trained primarily on English data will struggle with these languages.

Emotional expression is the 2nd challenge. An angry sentence and a calm sentence with identical words require different mouth movements because the facial muscles around the mouth contract differently: in anger, the jaw clenches and the lips tighten. Systems that ignore emotion produce technically accurate but emotionally flat results.

Head movement is the 3rd. Real people don't hold their heads perfectly still while talking. They nod, tilt, and turn. The lip synchronisation system needs to maintain accurate mouth-to-audio alignment even as the face rotates in 3D space. Early systems required near-static head positions. Current models handle moderate movement, though extreme angles still cause artifacts.
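One small piece of the head-movement problem, in-plane tilt, can be sketched by rotating the landmarks so the line between the eyes is horizontal before measuring the mouth. The `remove_roll` helper below is a hypothetical illustration. It handles only tilt within the image plane, not the turning or nodding that makes full 3D tracking hard.

```python
import math


def remove_roll(points, left_eye, right_eye):
    """Rotate 2D points about the eye midpoint so the eye line is level."""
    angle = math.atan2(right_eye[1] - left_eye[1], right_eye[0] - left_eye[0])
    cx = (left_eye[0] + right_eye[0]) / 2
    cy = (left_eye[1] + right_eye[1]) / 2
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)
    return [
        ((x - cx) * cos_a - (y - cy) * sin_a,
         (x - cx) * sin_a + (y - cy) * cos_a)
        for x, y in points
    ]


# A face tilted by 45 degrees: the eyes sit on a diagonal line.
left_eye, right_eye = (0.0, 0.0), (1.0, 1.0)
levelled = remove_roll([left_eye, right_eye], left_eye, right_eye)
print(levelled)
```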

Real-time lip synchronisation processes audio and generates mouth movements on the fly, with latency measured in milliseconds. This is necessary for live video calls, virtual assistants, and interactive gaming characters.

Pre-rendered lip sync takes longer but produces higher quality output. The system can run multiple passes, refine details, and correct errors. Most AI video creation tools, including those used for content marketing and personal branding, use pre-rendered processing because quality matters more than speed.
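The trade-off comes down to a time budget per frame. The sketch below checks whether a set of processing stages fits inside one frame interval. The stage names and timings are made-up placeholders, and it assumes the stages run one after another for every frame.

```python
def frame_budget_ms(fps):
    """Time available per frame if output must keep pace with playback."""
    return 1000 / fps


def fits_real_time(stage_ms, fps):
    """True if the stages, run back to back, finish within one frame."""
    return sum(stage_ms.values()) <= frame_budget_ms(fps)


# Hypothetical per-frame costs in milliseconds (illustrative only).
stages = {"audio_features": 5, "landmarks": 8, "mouth_generation": 20}

print(frame_budget_ms(25))         # 40.0 ms per frame at 25 fps
print(fits_real_time(stages, 25))  # 33 ms of work fits in 40 ms
print(fits_real_time(stages, 60))  # but not in about 16.7 ms
```

A pre-rendered pipeline has no such ceiling, so it can spend seconds on a frame if that improves the result.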

The progress in lip synchronisation over the past 6 years follows a clear trajectory from visibly artificial to nearly indistinguishable from real footage.

The early era was defined by Wav2Lip and its derivatives. These models could match mouth movements to audio with reasonable timing, but the visual quality was limited. Outputs had blurry mouth regions, inconsistent lighting between the generated mouth and the rest of the face, and teeth that looked like white smudges rather than individual shapes.

Videos from this period were useful for proof-of-concept demos and internal content, but not for anything public-facing. The "uncanny valley" effect was strong. Viewers couldn't always identify what was wrong, but they could feel it.

2 things changed during this period. First, diffusion models replaced or supplemented GANs for face generation, producing sharper and more consistent outputs. Second, training datasets grew massively. Models trained on millions of hours of video learned subtleties that smaller datasets couldn't capture: how lips stretch differently over different teeth structures, how skin folds near the mouth change with age, how facial hair interacts with lip movements.

By late 2024, the best systems could produce lip sync that passed casual inspection. Marketing teams started using AI-generated talking head videos for social media. The quality wasn't perfect, but it was good enough for content consumed on mobile screens at reduced resolution.

The gap between AI lip sync and real footage has narrowed dramatically. Venture capital investment in AI video startups reached $4.7 billion in 2025, a 189% increase from 2023. Much of that investment targeted lip sync and avatar quality improvements.

What makes modern lip synchronisation convincing comes down to 3 details that earlier systems got wrong:

1. Teeth rendering: Real teeth have translucency, individual shapes, and reflections that change with lighting and angle. Current models render individual teeth with realistic shading rather than the white blur of earlier generations.

2. Jaw movement: The lower jaw doesn't just open and close. It shifts laterally, protrudes slightly on certain sounds, and moves at different speeds for different phonemes. Modern models capture this full range of jaw articulation.

3. Micro-expressions: The muscles around the mouth don't operate in isolation. When someone smiles while talking, the cheeks lift, the eyes narrow slightly, and the nasolabial folds deepen.

Current lip synchronisation systems adjust these surrounding facial features to match the emotional tone of the speech, creating a coherent expression rather than a mouth pasted onto a static face.

Lip synchronisation technology has moved well beyond research labs. Here are 5 areas where it's creating real business value.

This is the fastest-growing use case. Creators and professionals record a short training video of themselves, and an AI generates new videos of their clone speaking any script. The lip synchronisation quality determines whether these videos are usable for professional content.

Platforms like Argil let users upload a 2-minute video and get an AI clone that generates fully edited short-form videos from scripts. The lip sync is what makes these clones look like the actual person rather than a digital puppet. For personal brand builders posting daily on LinkedIn or YouTube Shorts, this means consistent video output without filming every day.

The global dubbing market was valued at $4.8 billion in 2025 and is projected to reach $10.2 billion by 2034. Traditionally, dubbed content had a visible disconnect between the actor's mouth movements and the new audio track. AI lip synchronisation can now adjust the actor's mouth to match the dubbed audio, making foreign-language versions look native.

Studios use this for localization at scale. Instead of re-shooting scenes or accepting mismatched lip movements, AI adjusts the original footage. The result is more natural-looking content across languages with faster turnaround and lower cost.

Companies use AI presenters across onboarding, product demos, internal training, and sales outreach. These virtual presenters need convincing lip synchronisation to hold viewer attention. A training video with poorly synced audio loses credibility fast.

The advantage here is update speed. When a product feature changes, the company regenerates the video with a new script rather than re-filming. Good lip sync makes these updates seamless.

Game studios use lip sync technology for non-player character (NPC) dialogue. Rather than hand-animating mouth movements for thousands of lines of dialogue, AI generates them from the voice recordings. This cuts production time significantly while maintaining quality that players expect from modern titles.

In VFX, lip sync helps create digital doubles of actors for stunts, de-aging sequences, or posthumous performances. The technology needs to be photorealistic at cinema resolution, which pushes the quality bar higher than any other use case.

AI lip sync powers tools that generate sign language avatars and visual speech aids for hearing-impaired users.

When combined with text-to-speech systems, lip sync creates visual representations of spoken content that help with lip-reading comprehension. This is an underserved area with significant growth potential as accessibility requirements expand globally.

Not all lip synchronisation is equal. When comparing AI video platforms, 3 quality markers tell you what you need to know.

Play any AI-generated talking head video and watch only the mouth for 2 seconds. If anything feels off, even if you can't articulate what, the lip sync isn't good enough. Human perception of facial movement is extraordinarily sensitive. We evolved to read faces. A delay of as little as 50 milliseconds between audio and visual cues can trigger a subconscious discomfort response.
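An audio-visual offset can also be estimated numerically rather than by eye. The sketch below slides a per-frame mouth-openness signal against a per-frame audio-energy signal and picks the shift where they line up best. Both signals, the `best_lag` helper, and the 25 fps frame rate are assumptions for illustration. Real signals are noisy and would normally be mean-centred and smoothed first.

```python
def best_lag(audio_energy, mouth_open, max_lag=5):
    """Lag in frames that best aligns the mouth with the audio.

    Positive means the mouth moves after the sound (video lags audio).
    """
    def score(lag):
        pairs = [
            (audio_energy[i], mouth_open[i + lag])
            for i in range(len(audio_energy))
            if 0 <= i + lag < len(mouth_open)
        ]
        if not pairs:
            return float("-inf")
        return sum(a * m for a, m in pairs) / len(pairs)

    return max(range(-max_lag, max_lag + 1), key=score)


def offset_ms(lag_frames, fps=25):
    """Convert a lag in frames to milliseconds."""
    return lag_frames * 1000 / fps


# Toy signals: the mouth opens 2 frames after each burst of sound.
audio = [0, 0, 1, 0, 0, 0, 1, 0, 0, 0]
mouth = [0, 0, 0, 0, 1, 0, 0, 0, 1, 0]
lag = best_lag(audio, mouth)
print(lag, offset_ms(lag))
```

At 25 fps a single frame is already 40 ms, so an error of one or two frames sits in the range where viewers start to notice.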

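Look for these when evaluating tools: lips that close fully on /b/ and /m/ sounds, teeth that look realistic, and a jaw that moves naturally.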

Poor lip sync doesn't just look bad. It actively damages trust. Viewers who notice mismatched lip movements are more likely to question the content's authenticity and disengage. For personal branding and thought leadership content, where trust is the entire point, lip synchronisation quality is non-negotiable.

Several platforms offer lip sync capabilities, but they differ in approach, quality, and pricing.

Argil is an AI video creation platform built around the AI clone workflow. Upload a 2-minute video, and it generates a custom avatar with your appearance, voice, and mannerisms. The platform includes a full editing pipeline with captions, b-rolls, and transitions built in, so the output is a finished video, not a raw talking head that needs post-production.

Argil's lip sync is designed specifically for professional personal branding, where the bar for quality is high because viewers know what the real person looks like. The platform supports A/B testing of hooks, avatars, and languages from a single script. Pricing starts at $39/month for the Basic plan (3 custom avatars, 25 video minutes) and rises to $149/month for Pro (10 avatars, 100 video minutes).

HeyGen offers AI avatar videos with support for 175+ languages. It's strong on multilingual dubbing and has a large library of stock avatars. Pricing starts at $29/month. Lip sync quality is good on English content but can vary on tonal languages based on user reports.

Synthesia focuses on enterprise and corporate training use cases. It offers 160+ languages and a library of professional-looking avatars. The Starter plan begins at $29/month. Its lip synchronisation is optimized for formal, teleprompter-style delivery rather than conversational content.

D-ID specializes in animating still photos into talking videos. If you have a headshot and want it to speak, D-ID handles that workflow well. Plans start around $4.70/month for the Lite package. The lip sync works best for short clips and simple presentations rather than long-form content.

Captions offers AI video editing with lip synchronisation capabilities, particularly for short-form social content. It targets creators who want quick edits with synced audio overlays rather than full clone generation. Pricing starts at $9.99/month.

Lip synchronisation is the process of matching mouth movements to audio so that what someone appears to say visually matches what you hear. In AI video, this means software generates realistic mouth movements from an audio track or script, making it look like a person is naturally speaking.

Modern AI lip sync is accurate enough for professional use in most scenarios. The best tools produce results that pass casual viewing on social media and mobile devices. At cinema-quality resolution or with extreme close-ups, trained eyes can still spot minor artifacts, but the gap between AI and real footage continues to shrink.

Yes, though quality varies by language and platform. English lip sync is the most refined across all tools. Languages with similar mouth shapes to English (Spanish, French, German) perform well. Tonal languages like Mandarin and languages with unique phonemes (Arabic, Hindi) can produce lower quality results depending on the tool's training data.

Traditional dubbing replaces the audio track on a video without changing the visual mouth movements, which creates a visible mismatch. AI lip synchronisation goes further by actually modifying the speaker's mouth movements to match the new audio, making the dubbed version look like native speech.

Real-time lip sync exists but is primarily used in gaming, virtual assistants, and video conferencing tools. Most AI video creation platforms use pre-rendered lip sync, which takes longer but produces higher quality results. For content creation and personal branding, pre-rendered is the standard.

Run the 2-second test: watch only the mouth on a sample video. Check if lips close fully on /b/ and /m/ sounds, whether teeth look realistic, and if the jaw moves naturally. Test with your own face or voice if possible, since lip sync quality can vary between different face shapes and speaking styles. Platforms like Argil let you create a custom clone from your own footage, so you can evaluate quality with your actual appearance.
