Sketch to Video AI: The 6 Best Tools for Turning Drawings Into Videos in 2026

Sketch to video AI tools turn drawings and storyboards into finished videos. Compare the 6 best options, from image animators to script-to-video platforms.


Sketch to video AI has become one of the fastest-growing categories in the modern creator’s tool stack. The idea is simple: take a static image, a hand-drawn sketch, or a storyboard frame and let AI turn it into a moving video. For anyone wanting to turn their art into videos or Shorts to share online, this technology is revolutionary.

However, not every sketch to video AI tool is equal. Some tools animate existing images, others convert storyboard sequences into full scenes, and a newer class of tools skips the sketch entirely, going straight from a written script to a finished video with a real human presenter. Knowing which of these approaches and platforms is right for you could mean the difference between shaving hours off your production timeline and wasting them.

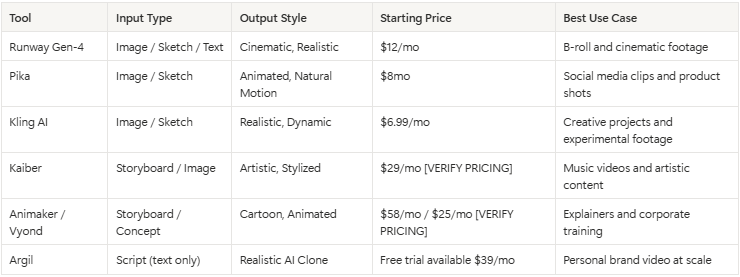

This guide breaks down the six best tools for every approach, compares them side by side, and helps you figure out which workflow actually makes sense for what you are building.

The term “sketch to video” AI refers to any tool that takes a static visual input (a pencil sketch, a digital illustration, a photograph, or a storyboard frame) and generates video from it using artificial intelligence. The AI handles motion, transitions, camera movement, and sometimes even audio.

The main benefit of these tools is speed. Traditional video production requires filming, editing, color grading, and sound design, so even a 30-second clip can take hours. Sketch to video AI tools compress that pipeline by removing the production middle layer. You go from concept to output without a camera, a studio, or a timeline full of keyframes.

What these tools can actually do spans a wide range. On one end, you have simple animation tools that add subtle motion to a still image (a sketch of a landscape gets gentle wind in the trees, for example). On the other end, you have full AI video generation, where a rough storyboard frame becomes a photorealistic scene with characters, lighting, and physics.

According to Grand View Research, the AI video generation market reached $1.1 billion in 2025 and is projected to grow at a 19.2% CAGR through 2030. That growth is being driven by creators and businesses who need video output at a pace that traditional production cannot match.
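As a rough check on what that growth rate implies, the compounding can be worked out directly. The sketch below assumes the 19.2% rate compounds annually from the 2025 base over five years. The 2030 figure it prints is arithmetic on the two numbers above, not a value quoted from Grand View Research, and the function name is illustrative.

```python
def project_market_size(base_billion: float, cagr: float, years: int) -> float:
    """Compound a starting market size forward at a fixed annual growth rate."""
    return base_billion * (1 + cagr) ** years


# Figures from the paragraph above: $1.1B in 2025, 19.2% CAGR, through 2030.
size_2030 = project_market_size(1.1, 0.192, 5)
print(f"Implied 2030 market size: ${size_2030:.2f} billion")
```

Under those assumptions, the market would a little more than double over the five years.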

These tools take a single image, sketch, or photograph and generate a short video clip from it. They work best when you already have a strong visual and want to add motion.


Runway has been one of the defining tools in AI video since Gen-1 launched in 2023. Gen-4, released in 2025, takes image-to-video generation to a new level of coherence. You upload a sketch, illustration, or photo, and Runway generates a video clip with camera movement, lighting changes, and natural motion applied to the scene.

The output quality is strong, particularly for cinematic b-roll. If you need an establishing shot, a product reveal, or atmospheric footage for a brand video, Runway handles it well. The tool also supports text-to-video prompts, so you can describe what you want and get a generated clip without any visual input at all.

Where Runway falls short is human subjects. There is no avatar system, no lip sync, and no way to generate a video of a person speaking. It excels at scenes, objects, and abstract motion. For content creators who need to appear in their own videos, Runway produces supporting footage but not the main content. Pricing starts at $12/month for the Standard plan, which includes 625 credits. Heavy users burn through credits quickly.

Best for: Filmmakers, brand teams, and editors who need AI-generated b-roll and cinematic clips from concept art or storyboards.


Pika carved out its niche by making image animation feel natural rather than artificial. Upload a sketch or photo, and Pika adds motion that respects the physics of the scene. Hair moves, water flows, fabric shifts. The effect is closer to a cinemagraph than a full video, but for social media, that subtlety works.

Pika 2.0 introduced better control over motion direction and intensity, which makes it more useful for product shots and social content. You can take a product sketch, animate it with a slow rotation or a reveal effect, and have a social-ready clip in under a minute. According to Pika's own metrics, users generated over 100 million videos on the platform in 2025.

The limitations are real, though. Clip length tops out at around 10 seconds. There is no avatar or clone system, so you cannot generate a video of a person talking. And while the motion quality is good, it lacks the cinematic depth of Runway for longer-form content. A free tier offers limited generations, and paid plans start at $8/month.

Best for: Social media managers, e-commerce brands, and creators who want quick animated clips from product images or illustrations.


Kling AI, developed by Kuaishou, made waves in 2025 with image-to-video results that rivaled Runway at a fraction of the cost. The tool generates video from a single image with strong motion quality, particularly for dynamic scenes with movement, action, or environmental effects.

What sets Kling apart is its willingness to push motion further than competitors. Where Pika adds subtle shifts, Kling generates full scene dynamics. A sketch of a city street becomes a video with moving cars, pedestrians, and changing light. A product sketch becomes a rotating showcase with depth and shadow. The model handles complex scenes better than most alternatives in this price range.

The tradeoff is predictability. Kling's outputs can vary significantly between generations. You might get a stunning result on the first try or spend several attempts getting the motion you actually wanted. There is no human presenter capability, and the editing controls are less refined than Runway's interface. Free tier access is available with limited daily generations, and paid plans start at $6.99/month.

Best for: Creative professionals and indie filmmakers experimenting with AI-generated footage who are comfortable iterating on outputs.

These tools are built for sequences rather than single frames. You provide multiple storyboard panels, and the tool generates a continuous video that follows the narrative arc.


Kaiber occupies a unique space in sketch to video AI. Rather than targeting photorealism, it leans into artistic transformation. Upload a series of storyboard frames or sketches, and Kaiber generates animated sequences with stylistic flair. Think watercolor motion, ink-wash animation, or oil-painting effects applied to your visual narrative.

This makes Kaiber a strong fit for music videos, artistic short films, and brand content that prioritizes visual style over realism. Several independent musicians have used Kaiber to produce full music videos from hand-drawn storyboards at a fraction of traditional animation costs. The tool supports multiple art styles and lets you control the degree of transformation from your original input.

The limitation is that Kaiber is style-first. If you need realistic human presenters, product demonstrations, or talking-head content, Kaiber is not the tool. It produces artistic output, and that is both its strength and its boundary. Pricing starts at $29/month for the Creator plan, and pay-as-you-go plans are also available.

Best for: Musicians, artists, and creative directors producing stylized video content from hand-drawn storyboards or concept art.


Animaker and Vyond represent the more traditional end of the sketch-to-video spectrum. These tools convert rough concepts and storyboard sketches into polished animated explainer videos, corporate training content, and presentation-style clips. They are template-driven, which means the output is consistent and professional but follows a recognizable animated aesthetic.

Vyond, in particular, has found a strong foothold in enterprise settings. According to Vyond's 2025 annual report, the platform is used by over 12,000 companies globally for internal communications and training content. You sketch out your storyboard, select characters and scenes from the library, and the tool builds the video for you with drag-and-drop simplicity.

The obvious limitation is the cartoon look. These tools produce animated content, not realistic video. For brands that need a polished, human, camera-quality feel, Animaker and Vyond are not the answer. They are best suited for contexts where clarity of message matters more than production style. Vyond is the pricier of the two, starting at $58/month for individuals, billed annually. Animaker offers a free tier, with paid plans from $25/month.

Best for: Corporate teams, educators, and SaaS companies creating explainer and training videos from storyboard concepts.

The tools above all start with a visual input: a sketch, an image, a storyboard. Argil does something completely different.

Argil is an AI video creation platform built around a simple premise. You record a 2-minute training video of yourself. Argil builds an AI clone of your face, voice, and mannerisms. From that point forward, you write a script, and Argil generates a fully edited short-form video of your clone delivering it, complete with lip sync, captions, b-roll cuts, and transitions.

This is a fundamentally different workflow from sketch to video. Instead of spending time drawing storyboards, creating visual assets, and then feeding them to an AI tool, you write what you want to say and get a finished video back. The production barrier that sketch-to-video tools reduce, Argil removes entirely.

The results feel closer to a real video than anything generated from a sketch. Because Argil works from a real recording of you, the clone preserves your expressions, your timing, and the way you actually move when you talk.

According to a recent Wyzowl survey, 89% of consumers say they want to see more video content from brands. This suggests that the challenge has never been demand for video. It has been the cost and time of producing it. Argil collapses that cost to the time it takes to write a script.

For content creators building a personal brand, the appeal is immediate. You can go from one video per week to five per day without touching a camera after the initial training recording. Real estate agents can create property walkthroughs narrated by their own face. Lawyers can produce educational content at scale. SMBs that never had the budget for video production now have a viable path to consistent video output.

The lip sync technology behind Argil is worth understanding. The clone does not paste your face onto a generic avatar. It reconstructs your facial movements frame by frame based on the audio from your script. The result is natural-looking speech that matches your cadence, not the uncanny-valley output that plagued earlier avatar tools. You can also fully customize your AI clone's appearance and mannerisms to match different content formats.

Where Argil sits relative to sketch-to-video tools is clear. If you need artistic animation from hand-drawn frames, use Kaiber. If you need cinematic b-roll from concept art, use Runway. But if you need to produce content where you appear on screen, talking to your audience, at a pace that matches how fast you can write, Argil is the tool that eliminates the bottleneck. For a broader view of where AI video generation is headed, the shift from visual-input tools to script-input tools is the defining trend of 2026.

Best for: Content creators, personal brand builders, real estate agents, lawyers, and SMBs who need video of themselves at scale without filming.

When comparing sketch to video vs script to video, the answer depends on what you are making and who it is for.

Sketch to video makes sense when the visual concept itself is the point. If you are directing a music video with a specific aesthetic, storyboarding a commercial with precise camera angles, or creating concept art previews for a client pitch, then starting from a visual input gives you creative control that text prompts alone cannot replicate. The sketch is doing real work in the process, not just adding a step.

For most content creators, though, the bottleneck is production time rather than visualization. You know what you want to say. You know how you want to show up on screen. What stops you from publishing five videos a week instead of one is the time it takes to film, edit, add captions, cut in b-roll, and export. Sketching a storyboard before handing it to an AI tool adds a step that script-to-video tools have made unnecessary.

The efficiency argument is straightforward. Sketch-to-video workflows have a minimum of three stages: create the visual input, feed it to the AI tool, and edit the output. Script-to-video compresses this to two: write the script, generate the video. When you are producing content at volume, that one fewer stage compounds into hours saved per week.

There is also the question of output type. Sketch-to-video tools produce footage without a human face. That works for b-roll, product visualization, and artistic content. But 91% of businesses now use video as a marketing tool, and the fastest-growing format is short-form content featuring a real person talking directly to the audience. For that format, sketch-to-video tools are the wrong starting point. You need a tool that puts you in the video, and that means either filming yourself or using an AI clone.

The practical takeaway: use sketch-to-video when the visual is the product. Use script-to-video when the message is the product. For anyone creating video content without wanting to be on camera every time, the script-to-video path is almost always faster.

Getting quality output from any AI video tool is about input quality and iteration. A few principles apply across the board.

Start with clean inputs. If you are using sketch-to-video tools, higher-contrast sketches with clear lines produce better results than rough pencil scribbles. Digital sketches outperform photos of paper drawings because the AI has less noise to interpret. For tools like Runway and Pika, the resolution of your input image directly affects the output quality.

Write detailed prompts when the tool supports them. Most image-to-video tools accept a text prompt alongside the image input. Describing the motion you want, such as "slow camera pan right, soft wind effect on foliage, warm afternoon lighting," gives the AI more to work with than uploading an image and hoping for the best.
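One way to keep prompts consistently detailed is to assemble them from named parts. The sketch below is a minimal illustration: the three-part breakdown (camera, subject motion, lighting) and the function name are assumptions made for this example, not a format that any of the tools above requires.

```python
def build_motion_prompt(camera: str, motion: str, lighting: str) -> str:
    """Join the non-empty prompt parts into one comma-separated description."""
    parts = [camera, motion, lighting]
    return ", ".join(p.strip() for p in parts if p and p.strip())


prompt = build_motion_prompt(
    camera="slow camera pan right",
    motion="soft wind effect on foliage",
    lighting="warm afternoon lighting",
)
print(prompt)
# prints: slow camera pan right, soft wind effect on foliage, warm afternoon lighting
```

Filling in each part separately makes it obvious when a prompt says nothing about the camera or the lighting.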

Expect to iterate. First-generation outputs are rarely final. Run two or three generations with the same input and pick the best result, or adjust your prompt between runs. The tools are probabilistic, not deterministic. Variation between outputs is normal and can work in your favor.
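The generate-several-and-pick-one habit can be sketched as a small loop. Everything here is hypothetical: `fake_generate` is a stand-in for whatever call or manual step produces a clip in your tool, and the `quality` score stands in for your own judgment of each result. None of it is a real API from Runway, Pika, or Kling.

```python
import random
from typing import Callable


def best_of_n(
    generate: Callable[[int], dict],
    score: Callable[[dict], float],
    n: int = 3,
) -> dict:
    """Run n generations with different seeds and keep the highest-scoring one."""
    candidates = [generate(seed) for seed in range(n)]
    return max(candidates, key=score)


# Stand-in generator: a real one would call your video tool and return a clip.
def fake_generate(seed: int) -> dict:
    rng = random.Random(seed)  # seeded so the stub is repeatable
    return {"seed": seed, "quality": rng.random()}


best = best_of_n(fake_generate, score=lambda clip: clip["quality"], n=3)
print(f"Keeping the clip from seed {best['seed']}")
```

In practice the scoring step is usually you watching the clips, but the structure is the same: same input, several runs, keep one.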

For script-to-video tools like Argil, the equivalent of input quality is script quality. A well-written script with clear pacing, natural pauses, and conversational tone produces a clone video that looks and sounds like a real recording. Writing the way you actually talk, rather than the way you write, makes a noticeable difference in how natural the output feels.

Match your tool to your output format. Do not use an artistic animation tool when you need a talking-head video. Do not use a script-to-video tool when you need cinematic b-roll. The best results come from using each tool for what it was designed to do, not from trying to force one tool to cover every use case.

Yes, but with caveats. Tools like Runway Gen-4 and Kling AI can take a hand-drawn sketch and generate a realistic video clip from it. The AI interprets the shapes, lines, and composition of your sketch and generates a photorealistic scene with motion.

The output quality depends heavily on the clarity of the sketch and the prompt you provide alongside it. Simple compositions with clear subjects produce the best results. Complex multi-character scenes can confuse the model.

Pika and Kling AI both offer free tiers with limited daily generations. Pika gives you a small number of free video generations per day, and Kling provides daily credits for free users. Neither free tier supports high-volume production, but they are useful for testing whether the tool fits your workflow before committing to a paid plan. Argil also offers a free trial for script-to-video generation.

Most sketch-to-video tools generate clips between 4 and 10 seconds per generation. Runway Gen-4 supports up to 10-second clips per generation. Pika and Kling produce clips of similar length.

You can extend output by stitching multiple generated clips together, but there is no single sketch-to-video tool that produces multi-minute videos from one input. Script-to-video tools like Argil can generate longer outputs because the script itself provides the temporal structure that keeps the video coherent across minutes rather than seconds.
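For the stitching approach, the planning arithmetic and the file list are easy to script. The sketch below assumes 10-second clips and hypothetical file names like `clip_01.mp4`. It only builds the text of a list file in the format that ffmpeg's concat demuxer reads, and it assumes the file names contain no single quotes.

```python
import math


def clips_needed(target_seconds: float, clip_seconds: float = 10.0) -> int:
    """Number of generated clips required to cover a target duration."""
    return math.ceil(target_seconds / clip_seconds)


def ffmpeg_concat_list(paths: list[str]) -> str:
    """Build the text of a file list for ffmpeg's concat demuxer."""
    return "\n".join(f"file '{p}'" for p in paths) + "\n"


n = clips_needed(60)  # a one-minute video from 10-second clips
paths = [f"clip_{i:02d}.mp4" for i in range(1, n + 1)]
print(n)  # prints: 6
print(ffmpeg_concat_list(paths), end="")
```

Saving that output as `clips.txt` and running `ffmpeg -f concat -safe 0 -i clips.txt -c copy stitched.mp4` joins the clips without re-encoding, provided they all share the same codec and resolution.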

No. While better sketches produce better results, most tools also accept photographs, screenshots, or AI-generated images as input. You can use a text-to-image tool to create your starting visual and then feed it into a video generation tool.

Script-to-video tools bypass the visual input entirely, so artistic ability is not a factor at all. The barrier to entry for AI video creation is lower than it has ever been.

No. Image-to-video tools like Runway and Pika can animate a photograph of a person, adding subtle motion like hair movement or a head turn. But generating a video of a specific real person speaking requires either deepfake technology, which raises ethical and legal concerns, or an AI clone platform like Argil where the person has consented and provided training data. The distinction matters. Argil only creates clones from your own recording, which keeps the process consent-based and commercially viable.

Sketch to video starts with a visual input (a drawing, image, or storyboard) and generates video from it. Text to video starts with a written description and generates video without any visual input.

Some tools, like Runway, support both. The key difference is creative control: sketch-to-video gives you more influence over the composition and framing of the output because you are providing the visual reference. Text-to-video is faster when you do not have a specific visual in mind and want the AI to handle both the imagery and the motion.
